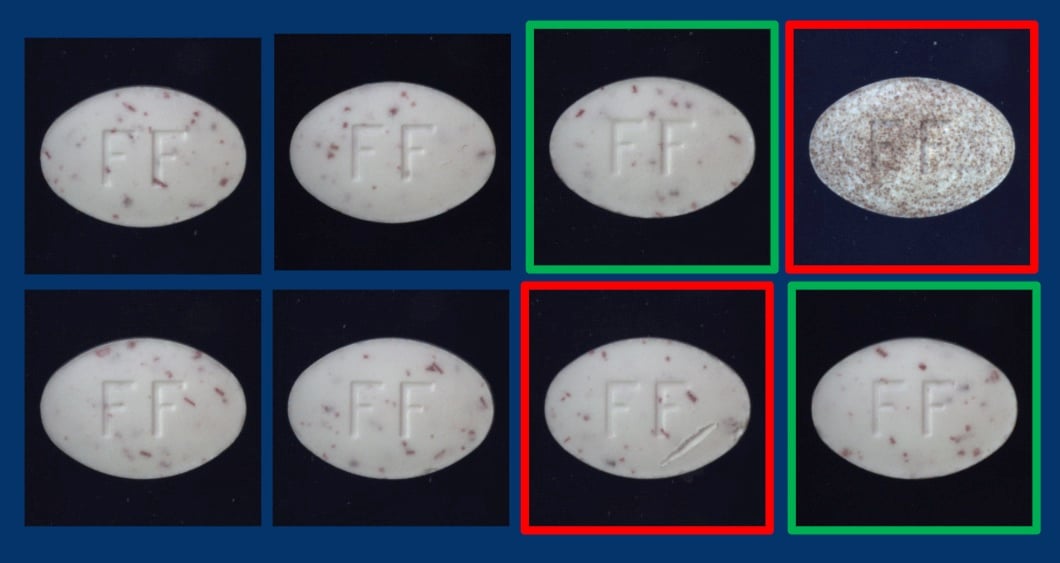

The quality of your AI model – and thus the accuracy of your vision system – is directly linked to the quality of the images and datasets used to train it.

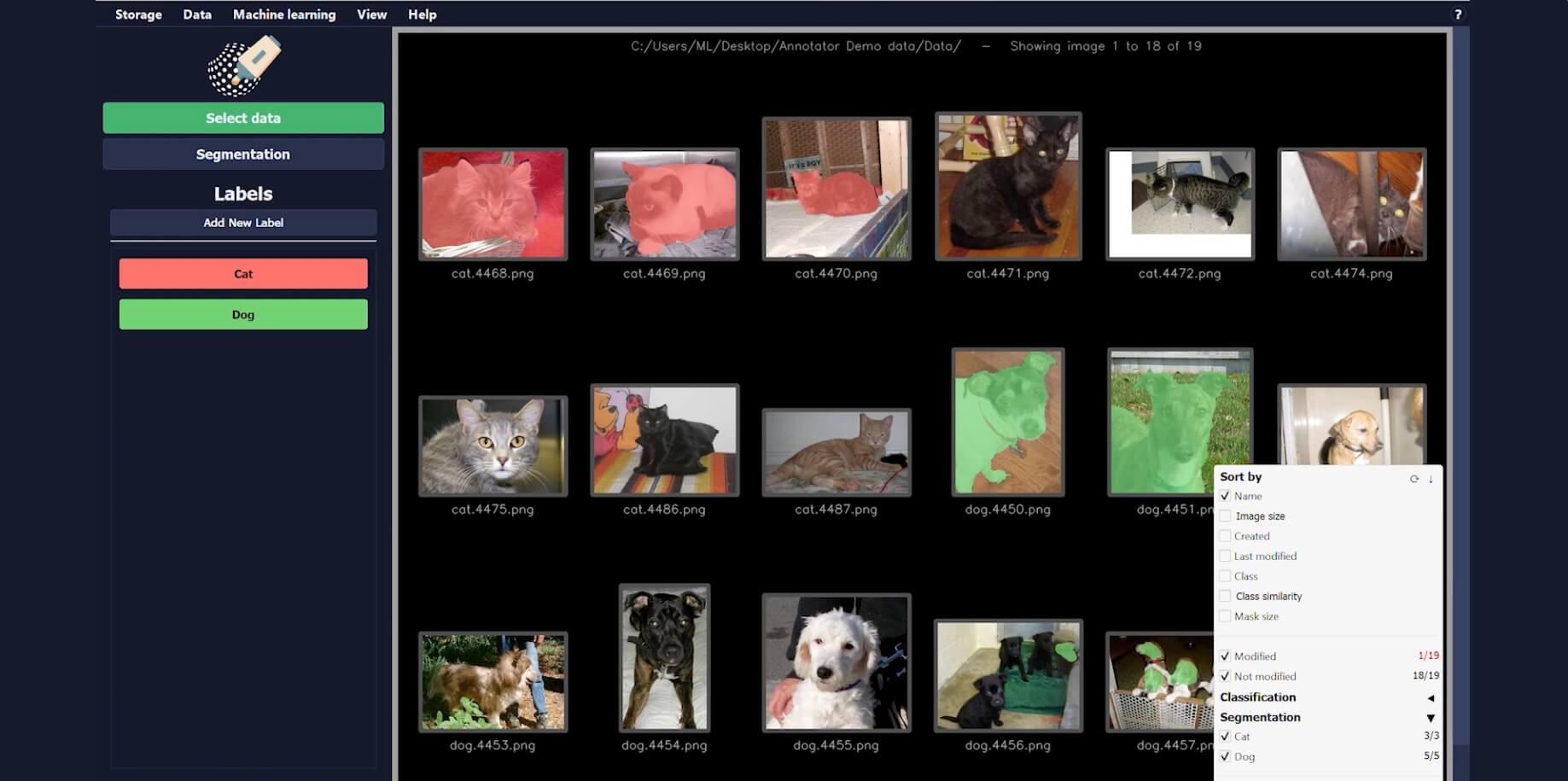

The key to success in this context is accurate and consistent annotation – that is, the classification and segmentation of defects in images – and it is exactly this task that JLI’s annotation tool, Annotator, helps with.

A new version of Annotator, version 5, has just been released (April 2026). It focuses on making the entire annotation process as easy as possible. User-friendliness is prioritized through tutorials, in-app documentation, and assistive tools, so that everyone contributing to annotation can work in the same systematic manner.

A collaborative approach

With Annotator, JLI Vision’s customers can choose to handle as much of the annotation work themselves as they like. While annotation can be time‑consuming and costly at scale, it is also a decisive factor in the quality of the AI model and the performance of the final vision system. If the dataset is created entirely without our involvement, we cannot verify its consistency – and therefore cannot guarantee the model’s performance.

For that reason, we always recommend a collaborative approach: JLI Vision’s experts support the annotation process at the beginning of a project to establish quality and clear guidelines, especially for segmentation. As the project progresses, the customer’s operators can gradually take over more of the work with confidence.

In addition to improved user-friendliness and guidance on annotation techniques and best practices, the new version of Annotator also offers a range of tools for advanced statistics and analysis of the dataset’s content and quality.

You can watch an introduction to Annotator in the video below, “Getting Started,” and find further videos on classification, segmentation, and bounding boxes on our YouTube channel.
